Who Knows Where the Bones Are Buried?

AI agents can extend patterns in your codebase, but they cannot see the buried context behind them. That changes what teams need to preserve.

In most systems that have been around for a while, there is a decent amount of knowledge that never made it into the code.

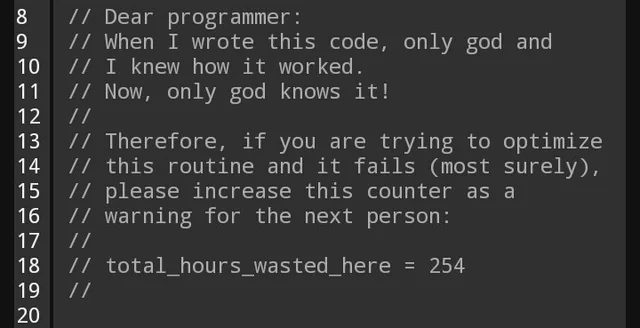

Someone remembers why a "clean" refactor was a bad idea. Someone knows which retry loop is not accidental, and which pattern should never be copied outside the one place where it exists.

That knowledge has always mattered. The difference is that now we are asking AI agents to operate in codebases that were built on top of it.

The Problem Is Not That Agents Make Bad Local Decisions

Agents are often doing the reasonable thing. They see duplication and remove it. They see inconsistency and normalize it. They see a pattern in one service and reuse it in another.

If all you give them is the visible surface area of the system, those are usually defensible moves. The failure mode is not bad reasoning. It is missing context.

An agent does not know that the retry logic was added after a partial write corrupted downstream state. It does not know that the abstraction everyone wants to remove was the result of a painful migration that is only half-completed. It does not know that a "temporary" workaround survived because every attempt to replace it made latency or correctness worse.

So it treats the visible system as the whole system.

That is a safe assumption for a new graduate reading code for the first time. But it is an especially dangerous assumption for something that can modify the codebase at lightning speed.

Code Captures Outcomes, Not Rejected Paths

This is the part that matters most.

Code is very good at preserving what exists. It is much worse at preserving why it exists, what was tried before it, and what should not be repeated.

You will find all of these in any mature codebase:

- Patterns that keep repeating

- Logic that looks redundant

- Abstractions that feel (slightly) off

More often than not, these are signs of drift. But sometimes they are remnants of real constraints. From the code alone, the two cases are easy to confuse.

That confusion is manageable when context lives in people. It gets riskier when the primary collaborator is an agent that reads the current state as truth and extends it without hesitation.

The Old Recovery Mechanism Was Human

This problem is not new. What is new is the recovery path.

Before AI agents, if something looked strange, you usually had three ways to recover the missing context:

- Ask the person who knew

- Dig through PRs, incident docs, and commit history

- Recreate the same failure and learn the reason the hard way

None of these are perfect. But they work because humans notice uncertainty. Good engineers slow down when a system feels inconsistent. They ask whether the inconsistency is accidental or intentional.

Agents usually do not. They are optimized to continue from available evidence. If the system does not surface the buried context, the agent does not pause and wonder what it might be missing.

I Am Already Seeing Small Versions of This

The pattern is subtle until it is not.

I have seen retry logic get "cleaned up" even though it was really a consistency guarantee. I have seen odd-looking patterns copied into new code even though they were only meant to isolate a temporary dependency failure. I am seeing teams preserve local structure while quietly reproducing global mistakes.

None of those changes look reckless in isolation. In fact, they often look thoughtful. That is exactly why this matters.

The issue is not that the agent ignored the code. The issue is that the code was never the full source of truth.

We Already Have Parts of the Answer

Some teams already produce artifacts that contain this missing context.

Architecture decision records (ADRs) explain why a path was chosen. Post-mortems explain how a system failed. Runbooks and playbooks preserve operational constraints. PRs capture trade-offs while they are still fresh. One of these artifacts probably contains the most valuable sentence in the entire stack: "We tried the obvious fix already, and it made things worse."

The problem is not that these artifacts do not exist. It is that they are operationally far away from the code they explain.

When a human works on a system, they can sometimes bridge that gap. They know where to search, who to ask, and which old document might matter. Agents do not have that instinct unless you build it into the environment.
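One way to build that instinct into the environment is a small index that maps parts of the codebase to the artifacts that explain them, so that a tool (or an agent) can ask "what should I read before touching this file?" The sketch below is illustrative only: the `ContextRecord` shape, the `records_for` helper, and every path and summary in the sample index are hypothetical, not taken from a real system.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class ContextRecord:
    """One piece of buried context, tied to the code it explains (hypothetical shape)."""
    path_prefix: str  # directory or file the record constrains
    kind: str         # e.g. "adr", "post-mortem", "runbook", "pr"
    summary: str      # the one sentence to read before editing
    location: str     # where the full artifact lives


def records_for(path: str, index: list[ContextRecord]) -> list[ContextRecord]:
    """Return every record whose prefix covers the given file path."""
    target = PurePosixPath(path)
    hits = []
    for record in index:
        prefix = PurePosixPath(record.path_prefix)
        # Compare path components, not raw strings, so that
        # "services/billing" does not match "services/billingx".
        if target == prefix or prefix in target.parents:
            hits.append(record)
    return hits


# Sample data: all paths and summaries are made up for illustration.
INDEX = [
    ContextRecord(
        path_prefix="services/billing",
        kind="post-mortem",
        summary="Retry loop guards against partial writes; do not remove.",
        location="docs/postmortems/billing-partial-write.md",
    ),
    ContextRecord(
        path_prefix="services/search",
        kind="adr",
        summary="Obvious cache fix was tried and made latency worse.",
        location="docs/adr/search-cache.md",
    ),
]

for record in records_for("services/billing/retry.py", INDEX):
    print(f"[{record.kind}] {record.summary} ({record.location})")
```

The design choice here is to keep the index next to the code and keyed by path, so the lookup needs no search skills and no memory of who to ask.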

What Needs to Change

The answer is not "write more docs." Most teams already have more documentation than they reliably use.

The answer is to make negative context more discoverable:

- Link decisions closer to the code they constrain.

- Preserve failed approaches, not just successful ones.

- Mark temporary patterns as temporary in a way that survives copy-paste.

- Expose what should not be repeated, not just what currently exists.
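As one possible shape for a marker that survives copy-paste, the sketch below assumes a made-up comment convention, `LOCAL-ONLY(scope=...)`, placed inside the code it describes so that it travels with the code when copied. A small check can then flag any file where the marker shows up outside its declared scope. The marker format, the `misplaced_markers` function, and the paths are assumptions for illustration, not an existing tool or standard.

```python
import re

# Hypothetical marker format, written as a comment inside the guarded code:
#   # LOCAL-ONLY(scope=services/billing): retry guards against partial writes
MARKER = re.compile(r"#\s*LOCAL-ONLY\(scope=(?P<scope>[^)]+)\):\s*(?P<reason>.+)")


def misplaced_markers(path: str, source: str) -> list[tuple[int, str, str]]:
    """Find markers that appear in a file outside the scope they name.

    Returns (line_number, scope, reason) for each marker whose scope does not
    cover the file's path, which suggests the pattern was copied elsewhere.
    """
    findings = []
    for number, line in enumerate(source.splitlines(), start=1):
        match = MARKER.search(line)
        if match is None:
            continue
        scope = match.group("scope").strip().rstrip("/")
        inside_scope = path == scope or path.startswith(scope + "/")
        if not inside_scope:
            findings.append((number, scope, match.group("reason").strip()))
    return findings


snippet = (
    "def send():\n"
    "    # LOCAL-ONLY(scope=services/billing): retry guards against partial writes\n"
    "    pass\n"
)

# The original location passes; a copy in another service is flagged.
print(misplaced_markers("services/billing/client.py", snippet))
print(misplaced_markers("services/search/client.py", snippet))
```

A check like this could run in CI or as a pre-edit step for an agent. It preserves the "do not repeat this elsewhere" signal even when the person who added the pattern is no longer around to say so.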

That last point is an important one. Most engineering systems are good at storing accepted decisions. They are much worse at storing rejected decisions. But rejected decisions are often where the real guardrails live.

If you do not preserve them, the next human may repeat the mistake slowly. The next agent will repeat it quickly.

A Simple Test

Pick any part of the system and ask one question:

If someone new worked in this part of the system, would they understand what this code is protecting?

If the answer is no, then the system is preserving the implementation, but not the constraint behind it.

That is survivable in a human-only system. It is a real liability in a system where agents are increasingly doing implementation, cleanup, migration, and extension work on your behalf.

The Honest Trade-Off

Making buried context visible takes work.

You have to decide which constraints deserve durable explanation, which failures are worth preserving, and how close that context needs to live to the code. Not every edge case deserves an artifact.

But if a piece of context is important enough to stop a bad refactor, a broken migration, or the resurrection of a known failure mode, then it is important enough to preserve somewhere a human or an agent can actually find it.

Agents do not know where the bones are buried.